What You’ll Find This Week

HELLO {{ FNAME | INNOVATOR }}!

I asked Rick Meekins in a recent podcast about his thoughts on AI, and it led to an interesting idea about evolution… We’ve seen the impacts of technology on human behavior in many other places throughout history (perhaps most recently in search and social media). And I believe we’re on the precipice of another such shift as AI begins to take over.

In this article, and the next few, I’m running with this thought to explore how AI will impact us as humans and our ability to accomplish work. Just what happens when “Don’t make me think” becomes a reality instead of just a mantra?

Here’s what you’ll find:

This Week’s Article: When Thinking Becomes Optional, Reality Becomes a Product

Share This: The Messy Middle Ladder

Don’t Miss Our Latest Podcast

This Week’s Article

When Thinking Becomes Optional, Reality Becomes a Product

Imagine this.

You’re in a meeting, nothing dramatic. Someone asks a basic question.

“So, what do we do next?”

Julie’s chatbot du jour has been listening the whole time, and it has an answer ready and waiting. A clean recommendation follows.

Confident tone.

Bullet points.

A plan with a timeline.

The room visibly relaxes.

No one asks the usual annoying follow-ups:

What assumptions did it make? What did it ignore? What would change the recommendation? What would we need to see in the real world to know it’s wrong?

Why would they? The chatbot did the hardest part.

It turned uncertainty into a tidy story.

The Packaged Answer

A system that can turn a messy situation into a neat explanation effectively hands the room a packaged interpretation of reality: what matters, what’s risky, what’s likely, and what to do next.

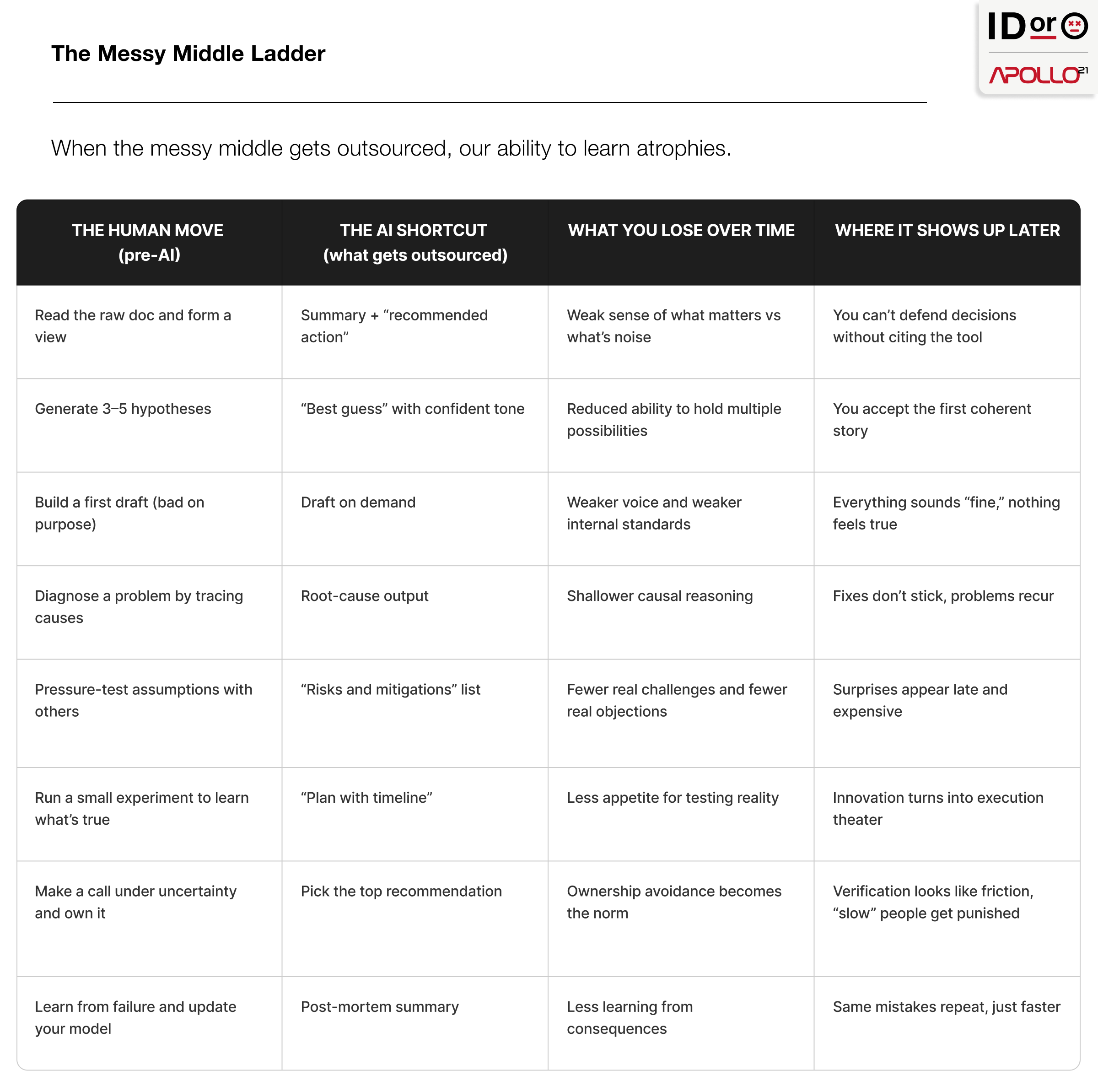

The hard part is sitting in uncertainty long enough to test assumptions, notice what doesn’t fit, and build your own internal model of what’s true. We’re cutting that out and calling it efficiency.

As a result, we’re not just asking machines to perform the underlying tasks that accomplish a goal. We’re offloading the act of cognition and outsourcing the final decision-making.

You still get outputs. What you lose is practice.

As humans, we sharpen our thinking through trial and error, and through failure. By thinking through scenarios and trying new solutions over and over again, we learn how to find the edges, the weird cases, the tradeoffs, and the consequences that show up late.

When a system does the messy middle for us, we stop earning the judgment that makes the output useful. Once the output becomes the path of least resistance, the model stops helping us make a decision.

It becomes the decision.

We’ve Already Rehearsed This Pattern

Call it human evolution if you want. (It is.) Not in the slow, genetic sense, but in the fast, neurological one. The brain rewires around what it repeats. Attention follows reward. Memory follows expectation.

We don’t need another moral panic about “this tech is ruining society” to understand what happens when a tool becomes normal. We’ve already lived through two practice shifts.

Search Changed Memory

In the age of Google, we increasingly store directions instead of facts. You don’t remember the answer; you remember how to find it. If you expect the information to be available later, your brain adapts. Your memory system reallocates effort. It also changes what being knowledgeable feels like from the inside.

Feeds Changed Attention

Feeds didn’t just deliver information. They delivered rewards. We have evidence that when teens see “likes,” reward circuitry lights up and the numbers change behavior in real time. Habitual social media checking in early adolescence has been linked to changes over time in neural sensitivity to anticipating social rewards and punishments.

The feed doesn’t just show you content. It trains you. Quick hits beat slow work. Social proof becomes a compass. You learn what gets rewarded, then you learn to want the reward.

Search trained memory strategy. Feeds trained reward strategy. AI is about to train judgment strategy. The question is whether it trains judgment like a muscle, or replaces it like a crutch.

The Step-Change: Memory Offload to Judgment Offload

Looking something up isn’t the same as thinking something through.

With search, the tool retrieved. You still had to sift through the results and decide what you believed. You weighed multiple sources, connected dots, and lived in uncertainty long enough to form a view you could defend.

With AI, you don’t just get information. You get interpretation. The system doesn’t hand you raw material. It hands you a finished answer that sounds coherent, often confident, and ready to paste into the next step.

That changes how people make decisions.

Reasoning is a skill you build through repetition. Skip enough reps and you don’t just lose speed. You lose sensitivity. The early warning system that tells you, “Something’s off here.”

And humans don’t treat confident outputs like suggestions. We treat them like permission. That’s why over-trust in automated help shows up again and again across domains.

If you can’t tell when the system is wrong, you’re not using it. You’re deferring to it.

Why the Handoff Happens

The handoff from thinking to accepting is predictable because it offers something humans love: plausible deniability.

Fast outputs move the room forward.

Plausible outputs save effort.

Confident outputs lower social risk.

Everyone can stop arguing and start shipping. But the stickiest benefit is that no one has to own the decision.

If it works, you look decisive. If it fails, you have somewhere to point. “It wasn’t my idea. The machine suggested it.”

Once that escape hatch exists, verifying the output means you’re volunteering to own the outcome. The moment you verify, you can’t hide behind “the machine suggested it” anymore. You chose it.

Verification starts to look like friction. Friction starts to look like incompetence. Soon the person who asks the annoying questions becomes the person who “can’t keep up.”

That’s how we come to accept these evolutionary changes without even thinking about them. The room learns a new rule: accept the output, move fast, stay safe. Anyone who insists on human judgment looks slow. Eventually the group stops making space for human judgment at all.

The Missing Middle

The first thing AI eats isn’t going to be jobs. AI is coming for your ability to learn.

AI can hand you the finished output so fast that the in-between work disappears. The part where you struggle through uncertainty long enough to form a judgment of your own.

But that’s the part where you learn by doing. The ugly first draft. The second attempt that’s still wrong. The rough analysis that forces you to choose with incomplete information. The first explanation you have to defend, then revise when reality disagrees.

That’s how humans build the learning muscle. You get better because you make calls, see consequences, adjust, and try again. Over time, your brain starts spotting patterns before you can even explain them.

We’re building a system that takes that layer away. The work still gets done, outputs still show up, but the learning muscle stops getting used. It atrophies.

You don’t even notice right away. Everything looks fine until you hit a situation the model hasn’t seen before. Then you find out whether anyone still knows how to reason without it.

And it’s not just individuals. It’s teams. If the messy middle disappears, expertise stops reproducing. You end up with lots of people who can produce outputs and fewer people who can explain why the output is right, what would make it wrong, and what to do when reality doesn’t match the recommendation.

The New Human Edge

Next time you’re asked, “So, what do we do next?” a confident plan will show up before anyone has a chance to think out loud.

That moment is where evolution changes innovation.

If the room can ask a question and receive an answer that looks finished, planned, and time-boxed, the incentive to experiment collapses. Why run a test when the system already sounds certain? Why explore when the output already tells you what matters?

The danger isn’t that the answer is wrong. The danger is that it makes learning feel optional.

Experimentation is how we learn what’s true before we choose. It’s how teams discover constraints they didn’t know existed. It’s how you find the weird case that breaks the neat plan. When you stop doing that work, you don’t just lose rigor. You lose the ability to adapt when the context changes.

And biology follows behavior. The brain rewires around what it repeats. If the repeated motion becomes accepting a packaged answer and shipping it, that becomes what the room gets good at. Curiosity weakens. Skepticism becomes friction. Learning gets treated like delay.

Next, the model stops advising and starts deciding.

Share This